Large Language Models (LLMs) are increasingly embedded in production systems that power customer support, search, analytics, content generation, and mission-critical automation. As organizations depend on these systems for real-time decisions and responses, uptime is no longer optional. Even brief outages can interrupt business workflows, degrade customer trust, and create measurable revenue loss. Ensuring high availability therefore requires more than just reliable infrastructure—it demands resilient, well-architected failover strategies specifically tailored to LLM workloads.

TLDR: High availability for LLM systems requires deliberate failover strategies that prevent downtime during outages, latency spikes, or provider disruptions. Organizations should combine multi-provider routing, regional redundancy, fallback models, circuit breakers, queue-based buffering, and graceful degradation techniques. No single method is sufficient on its own—robust systems layer these mechanisms together. Planning for failure is the only reliable way to achieve production-grade resilience.

Below are six LLM failover systems, each addressing a different failure mode in production environments.

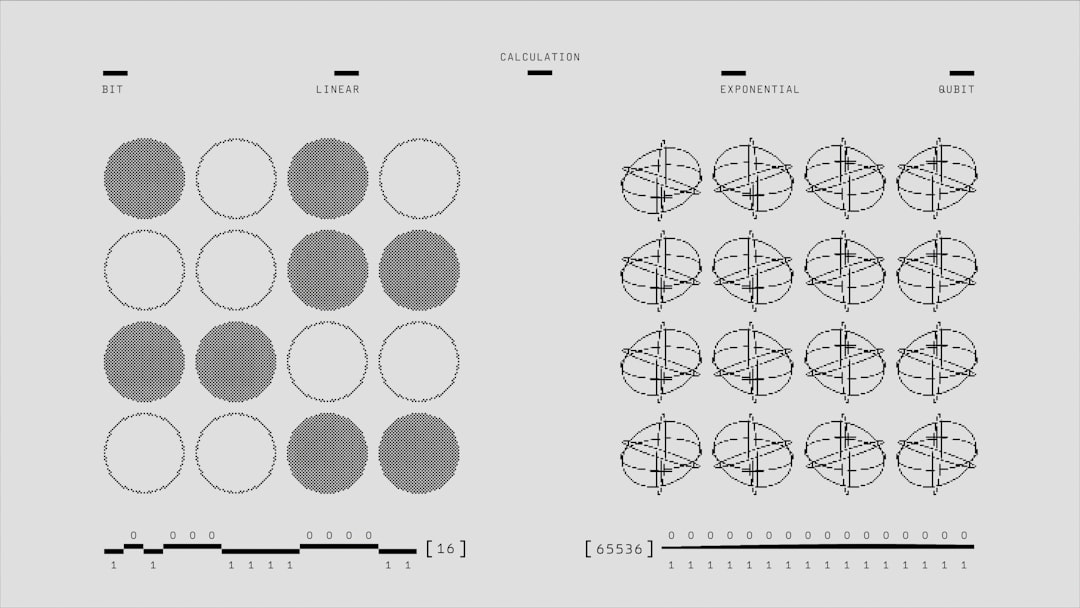

1. Multi-Provider Active-Active Routing

One of the most effective ways to mitigate provider-level outages is to deploy multi-provider active-active routing. Instead of relying on a single model vendor, traffic is dynamically distributed across two or more providers.

This approach offers several advantages:

- Fault isolation: If Provider A experiences downtime, traffic shifts to Provider B.

- Latency optimization: Requests can be routed to the fastest responding endpoint.

- Cost balancing: Traffic can be weighted toward more economical providers during peak periods.

In practice, a smart routing gateway evaluates health checks, latency metrics, and error rates in real time. If thresholds are exceeded, traffic shifts automatically without user-visible interruption. Advanced systems apply weighted distribution to continuously test backup providers, ensuring they remain “warm” and validated.
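The routing decision described above can be sketched in a few lines. This is a minimal illustration, not a production gateway: the `ProviderStats` fields, the threshold values, and the function names are all assumptions made for the example, and a real system would populate the statistics from live health checks.

```python
import random
from dataclasses import dataclass


@dataclass
class ProviderStats:
    """Rolling health snapshot for one provider (fields are illustrative)."""
    name: str
    weight: float          # share of traffic when healthy
    error_rate: float      # fraction of failed requests in the recent window
    p95_latency_ms: float  # recent 95th-percentile latency


def healthy(p: ProviderStats, max_error_rate: float = 0.05,
            max_latency_ms: float = 4000.0) -> bool:
    """A provider is eligible only while both (assumed) thresholds hold."""
    return p.error_rate <= max_error_rate and p.p95_latency_ms <= max_latency_ms


def pick_provider(providers: list[ProviderStats], rng: random.Random) -> str:
    """Weighted random choice among the providers that pass the health check."""
    eligible = [p for p in providers if healthy(p)]
    if not eligible:
        raise RuntimeError("no healthy provider available")
    weights = [p.weight for p in eligible]
    return rng.choices(eligible, weights=weights, k=1)[0].name
```

Giving the backup provider a small non-zero weight means it keeps receiving a trickle of real traffic, which is one way to keep it warm and validated. When a provider crosses a threshold it simply drops out of the eligible set and the remaining providers absorb its share.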

Key consideration: Model behavior may vary between providers. Robust validation, structured outputs, and output normalization layers help maintain consistency.

2. Cross-Region Deployment Redundancy

Even when using a single provider, geographic outages can disrupt service availability. Natural disasters, fiber cuts, or data center power failures can isolate entire regions.

Cross-region redundancy ensures that LLM endpoints are replicated across multiple geographic locations. Traffic is routed based on region health status and latency.

Typical implementation includes:

- Primary region handling the majority of traffic

- Secondary region on hot standby

- Automated DNS or load balancer-based failover

This configuration reduces exposure to localized outages and supports business continuity. Mission-critical applications often go a step further and run active-active deployments across multiple continents, so that no region sits idle waiting for a failover.
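As a rough sketch of the primary and hot-standby selection logic, the snippet below picks a region from health and latency data. The `Region` type and `choose_region` function are hypothetical names for this example; in practice the same decision is usually made by DNS health checks or a load balancer rather than application code.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Region:
    name: str
    priority: int      # lower number is preferred; the primary region is 0
    healthy: bool      # result of the most recent health check
    latency_ms: float  # recent measured latency to the region


def choose_region(regions: list[Region]) -> Optional[str]:
    """Return the healthy region with the best priority, breaking ties by latency."""
    candidates = [r for r in regions if r.healthy]
    if not candidates:
        return None  # caller decides how to degrade when every region is down
    return min(candidates, key=lambda r: (r.priority, r.latency_ms)).name
```

Giving two regions the same priority turns this into a simple active-active policy, since the tie is then broken by latency alone.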

Best practice: Conduct routine failover simulations. Many outages escalate because the backup region has not been recently tested under production load.

3. Tiered Model Fallback Strategy

Not all requests require the most advanced LLM available. A tiered fallback architecture prioritizes continuity of service over model sophistication.

For example:

- Primary: High-capability flagship model

- Secondary: Smaller, faster general-purpose model

- Tertiary: Lightweight rule-based or templated fallback

If the primary model times out or its error rate rises above a set threshold, the system automatically retries with the secondary model. If capacity remains constrained, the system degrades further to the tertiary tier while still delivering usable output.
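The fallback chain can be expressed as an ordered list of callables that are tried in turn. The sketch below assumes each tier is wrapped in a function that takes a prompt and either returns text or raises on timeout or error; `answer_with_fallback` and the `Tier` alias are illustrative names, not part of any provider SDK.

```python
from typing import Callable, Sequence

# A tier is a (label, callable) pair; the callable raises on timeout or error.
Tier = tuple[str, Callable[[str], str]]


def answer_with_fallback(prompt: str, tiers: Sequence[Tier]) -> tuple[str, str]:
    """Try each tier in order and return (tier_label, response) from the first success."""
    last_error = None
    for label, call in tiers:
        try:
            return label, call(prompt)
        except Exception as exc:  # timeouts, rate limits, server errors
            last_error = exc      # remember the cause, then fall through to the next tier
    raise RuntimeError("all tiers failed") from last_error
```

Returning the tier label alongside the response lets downstream code and dashboards see how often requests are being served by a degraded tier.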

This ensures that users receive functional responses rather than complete failures. In customer-facing environments, a slightly simpler response is far preferable to an error message.

Operational insight: Maintain compatible prompt structures across tiers to reduce inconsistencies. Standardized schema outputs significantly reduce downstream disruptions during failover events.

4. Circuit Breakers and Intelligent Retry Logic

Failover is not just about switching providers—it’s about preventing cascading failure. Circuit breaker patterns detect when an upstream system exceeds failure thresholds and temporarily halt traffic to prevent overload.

Without circuit breakers, retry storms can occur. When thousands of requests retry simultaneously, they can worsen congestion and extend outages.

Effective implementation includes:

- Error rate monitoring with sliding windows

- Automatic traffic suspension when thresholds are exceeded

- Exponential backoff retry mechanisms

- Gradual traffic reintroduction after recovery

By isolating failure quickly, circuit breakers preserve overall system stability. This pattern is especially critical for LLM APIs with shared rate limits and dynamic throughput caps.

Important: Retry logic should be bounded. Infinite retries can saturate infrastructure and increase costs uncontrollably.
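A minimal sketch of these pieces follows: a breaker that tracks a sliding window of outcomes, plus a bounded retry helper with exponential backoff. The class and parameter names are assumptions for illustration. Recovery is simplified to a single trial request after a cooldown rather than a gradual ramp, and jitter is omitted to keep the example short.

```python
import time
from collections import deque


class CircuitBreaker:
    def __init__(self, window=20, max_error_rate=0.5, cooldown_s=30.0,
                 clock=time.monotonic):
        self.results = deque(maxlen=window)  # sliding window of recent outcomes
        self.max_error_rate = max_error_rate
        self.cooldown_s = cooldown_s
        self.clock = clock
        self.opened_at = None                # None means the circuit is closed

    def allow(self) -> bool:
        """Closed: always allow. Open: allow a trial only after the cooldown."""
        if self.opened_at is None:
            return True
        return self.clock() - self.opened_at >= self.cooldown_s

    def record(self, ok: bool) -> None:
        if self.opened_at is not None:
            # Outcome of a trial request made after the cooldown.
            if ok:
                self.opened_at = None
                self.results.clear()
            else:
                self.opened_at = self.clock()  # restart the cooldown
            return
        self.results.append(ok)
        full = len(self.results) == self.results.maxlen
        if full and self.results.count(False) / len(self.results) > self.max_error_rate:
            self.opened_at = self.clock()      # trip the breaker


def call_with_retries(fn, breaker, max_attempts=3, base_delay_s=0.5,
                      sleep=time.sleep):
    """Call fn with a bounded number of attempts and exponential backoff."""
    for attempt in range(max_attempts):
        if not breaker.allow():
            raise RuntimeError("circuit open")
        try:
            result = fn()
        except Exception:
            breaker.record(False)
            if attempt == max_attempts - 1:
                raise                           # attempts exhausted
            sleep(base_delay_s * 2 ** attempt)  # delay doubles on each retry
        else:
            breaker.record(True)
            return result
```

The `clock` and `sleep` parameters are injectable so the behavior can be tested without real waiting. In a deployment with many clients, adding random jitter to each delay helps avoid synchronized retries.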

5. Queue-Based Buffering and Asynchronous Processing

Real-time systems are particularly vulnerable to short bursts of downtime. Incorporating queue-based buffering provides a protective layer between the application and the LLM provider.

Instead of directly processing each request, the system pushes tasks into a durable message queue. Worker services consume tasks at a controlled rate.

This architecture provides:

- Traffic smoothing during spikes

- Temporary storage during upstream degradation

- Improved resilience against transient failures

If an LLM endpoint becomes unavailable, workers pause consumption but the queue preserves incoming tasks. Once service stabilizes, processing resumes without data loss.

Design consideration: Define the maximum acceptable queue latency. Some applications, such as compliance checks or conversational chat, need time-based expiry rules so that stale requests are dropped instead of being answered too late to be useful.
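The buffering and expiry behavior can be illustrated with a small in-memory queue. This is only a sketch: a production system would use a durable message broker, and the `BufferedQueue` class, its `max_age_s` parameter, and the `upstream_ok` callback are hypothetical names chosen for the example.

```python
import time
from collections import deque
from dataclasses import dataclass


@dataclass
class Task:
    prompt: str
    enqueued_at: float


class BufferedQueue:
    """In-memory stand-in for a durable queue, with time-based expiry."""

    def __init__(self, max_age_s: float, clock=time.monotonic):
        self.tasks = deque()
        self.max_age_s = max_age_s
        self.clock = clock

    def put(self, prompt: str) -> None:
        self.tasks.append(Task(prompt, self.clock()))

    def drain(self, process, upstream_ok):
        """Process tasks while upstream is healthy; return (done, expired)."""
        done, expired = [], []
        while self.tasks and upstream_ok():
            task = self.tasks.popleft()
            if self.clock() - task.enqueued_at > self.max_age_s:
                expired.append(task.prompt)  # too old to be useful, drop it
                continue
            try:
                done.append(process(task.prompt))
            except Exception:
                self.tasks.appendleft(task)  # keep the task for the next drain
                break
        return done, expired
```

While `upstream_ok()` reports an outage, `drain` returns immediately and the tasks stay queued. Once the endpoint recovers, the next call processes what is still fresh and reports what expired.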

6. Graceful Degradation and Feature Flag Control

High availability does not always mean maintaining full functionality. In many cases, graceful degradation provides a controlled reduction of capability while preserving core service.

This approach is especially valuable when LLM workloads are embedded across multiple features within a product. If one subsystem fails, others can remain operational.

Examples include:

- Temporarily disabling background content suggestions

- Returning cached responses for common queries

- Switching to extractive summaries instead of generative ones

- Limiting conversation length during high load

Feature flags allow rapid toggling of dependent services without redeploying code. When integrated with monitoring systems, automated policies can disable non-essential LLM features during instability.
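As an illustration of flag-driven degradation, the sketch below disables non-essential features when the observed error rate is high and switches summarization to an extractive fallback. The flag names, the 0.2 threshold, and the first-sentence heuristic are assumptions for the example; a real deployment would read flags from a feature-flag service.

```python
# Hypothetical set of features that may be switched off under instability.
NON_ESSENTIAL = {"suggestions", "generative_summary"}


def apply_policy(flags: dict, error_rate: float, threshold: float = 0.2) -> dict:
    """Return a copy of flags with non-essential features off while errors are elevated."""
    if error_rate <= threshold:
        return dict(flags)
    return {name: (on and name not in NON_ESSENTIAL) for name, on in flags.items()}


def summarize(text: str, flags: dict, generate) -> str:
    """Use the generative path when enabled, otherwise an extractive fallback."""
    if flags.get("generative_summary", False):
        return generate(text)
    # Extractive fallback: return the first sentence of the input unchanged.
    return text.split(". ")[0].rstrip(".") + "."
```

Because the policy returns a new flag dictionary and never mutates the input, the original configuration is restored simply by re-evaluating it once the error rate drops.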

This strategy prioritizes core user workflows while preventing total system failure.

Additional Architectural Considerations

Beyond selecting specific failover systems, production reliability depends on disciplined operational practices.

Monitoring and observability should include:

- Latency distribution tracking

- Error rate monitoring by provider and region

- Token consumption analysis

- Cost anomaly detection

Chaos engineering can also be used to intentionally simulate provider outages or regional disruptions. These controlled experiments validate assumptions long before real failures occur.

Finally, logging and structured output validation mechanisms help detect silent degradation—cases where responses technically succeed but quality materially declines.
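One simple form of structured output validation is to check each response against an expected shape before it reaches downstream code. The sketch below assumes responses are JSON objects with an `answer` string and a numeric `confidence`; that schema is hypothetical and exists only to make the example concrete.

```python
import json

# Hypothetical response schema: field name -> accepted Python type(s).
REQUIRED_FIELDS = {"answer": str, "confidence": (int, float)}


def validate_response(raw: str) -> list[str]:
    """Return a list of problems; an empty list means the response passed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return ["not valid JSON"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]
    problems = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in data:
            problems.append(f"missing field: {field}")
        elif not isinstance(data[field], expected):
            problems.append(f"wrong type for {field}")
    if isinstance(data.get("answer"), str) and not data["answer"].strip():
        problems.append("empty answer")
    return problems
```

Logging the rate of non-empty problem lists per provider and model tier gives an early signal of quality decline even when every request returns a successful status.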

Designing for Layered Resilience

No individual failover system guarantees uninterrupted service. The most robust architectures combine multiple strategies into a layered resilience model.

A mature production setup might include:

- Multi-provider routing (System 1)

- Cross-region redundancy (System 2)

- Tiered fallback models (System 3)

- Circuit breakers and bounded retries (System 4)

- Queue-based buffering (System 5)

- Graceful degradation policies (System 6)

This layered design ensures that failure at one level does not propagate uncontrollably through the system. Each layer isolates risk and maintains continuity.

Conclusion

As LLMs become embedded in business-critical workflows, availability targets are rising to match traditional enterprise reliability standards. Outages are inevitable—whether due to provider interruptions, network latency, quota exhaustion, or regional failures. The difference between disruption and continuity lies in preparation.

Organizations that deploy multi-layered failover systems can continue operating even during adverse conditions. Those that do not risk degraded service, customer dissatisfaction, and operational instability.

High availability is not accidental. It is engineered, tested, layered, and continuously refined. In production LLM environments, failover systems are not optional safeguards—they are foundational infrastructure.