As artificial intelligence systems become increasingly embedded in critical business processes, the need for controlled, secure environments to test models and workflows has never been greater. Organizations are deploying AI for decision-making in finance, healthcare, logistics, customer service, and cybersecurity—contexts where failure can be costly or even dangerous. AI sandbox environments provide a structured way to experiment, validate, and stress-test systems before they are exposed to real users or live data. Properly designed sandboxes reduce risk, improve compliance, and build trust in AI-driven outcomes.

TLDR: AI sandbox environments are controlled testing spaces where models and workflows can be evaluated safely before deployment. They reduce operational, legal, and reputational risk by isolating experiments from real-world consequences. Effective sandboxes combine data governance, security controls, monitoring, and simulation tools. Organizations that invest in sandboxing gain higher reliability, better compliance, and more trustworthy AI systems.

Table of Contents

What Is an AI Sandbox Environment?

An AI sandbox environment is a segregated, tightly controlled infrastructure where artificial intelligence models and related workflows can be trained, tested, evaluated, and stress-tested without affecting production systems. Unlike general development environments, sandboxes are specifically engineered to contain risk. They limit data access, enforce policy controls, and monitor system behavior under defined conditions.

In practical terms, a sandbox may include:

- Replicated but anonymized or synthetic datasets

- Isolated compute environments

- Predefined access permissions and audit logs

- Simulation tools that replicate real-world scenarios

- Automated testing pipelines

These controls enable teams to examine how models behave under edge cases, adversarial conditions, and unexpected data inputs—without exposing customers, operations, or sensitive infrastructure to harm.

Why AI Systems Require Controlled Testing Environments

AI systems differ from traditional software because they are probabilistic rather than deterministic. Their outputs depend heavily on training data, fine-tuning methods, and contextual inputs. Even small changes in data distribution can produce unexpected results.

Without safe testing environments, organizations face several risks:

- Data leakage: Improperly handled real-world data may violate privacy laws.

- Model bias: Unexamined biases can lead to discriminatory outcomes.

- Operational disruption: Faulty models may cause system outages or incorrect decisions.

- Security exploitation: Malicious actors may manipulate model inputs.

- Compliance failures: Regulatory frameworks increasingly demand validation documentation.

AI sandboxes serve as a containment mechanism. They create a controlled experimental boundary where failure is expected, observed, and corrected before public exposure.

Core Components of a Robust AI Sandbox

Building a credible sandbox environment requires more than simply duplicating infrastructure. It demands deliberate architectural and governance design.

1. Isolated Infrastructure

Network segmentation ensures that testing activities remain separate from production systems. Access control mechanisms such as identity-based permissions and role-based access control (RBAC) restrict who can alter models or datasets.

Isolation prevents unintended propagation of configuration changes or corrupted outputs into live services. It also makes it easier to conduct forensic analysis if an experiment produces harmful outcomes.

2. Synthetic and Masked Data

Realistic but anonymized datasets are central to meaningful testing. Many organizations generate synthetic data that mimics statistical properties of real user information without compromising privacy.

Benefits include:

- Reduced exposure to personally identifiable information (PII)

- Freedom to test edge cases aggressively

- Simplified compliance audits

Where production data must be used, masking and tokenization techniques reduce legal and ethical exposure.

3. Monitoring and Observability

Sandbox environments require comprehensive logging of:

- Model inputs and outputs

- Version changes

- Hyperparameter adjustments

- System performance metrics

Observability ensures that experimental findings are documented and reproducible. This documentation is essential for regulatory review and internal governance committees.

4. Automated Testing Frameworks

Just as traditional software uses automated testing suites, AI sandboxes incorporate structured validation procedures such as:

- Bias detection testing

- Drift analysis simulations

- Adversarial robustness checks

- Performance benchmarking under load

Automation ensures consistent validation across iterations and accelerates responsible deployment timelines.

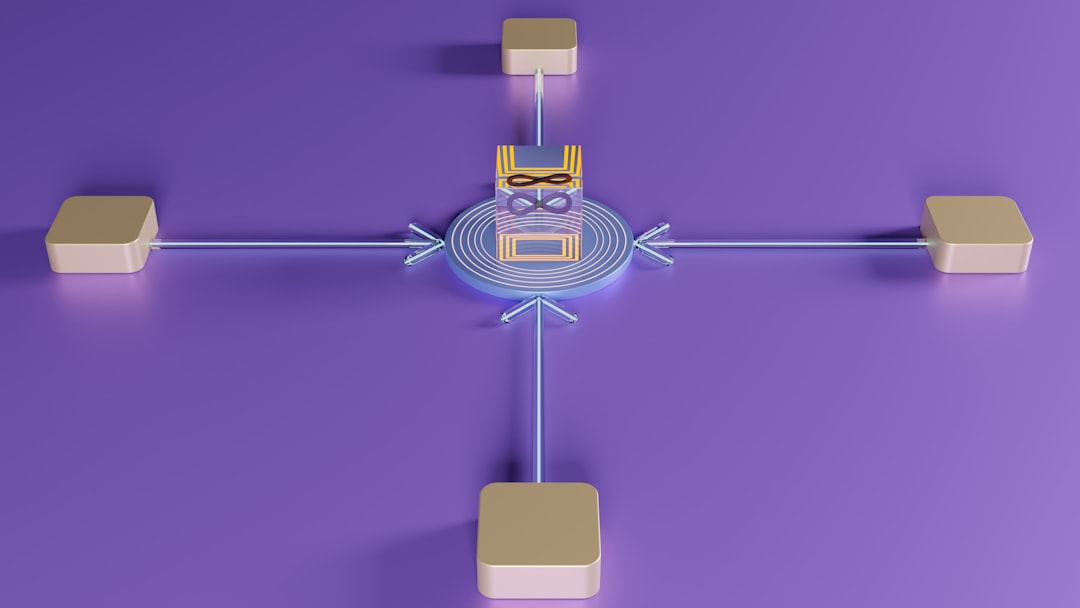

Testing AI Workflows, Not Just Models

Modern AI applications rarely operate as standalone models. They exist within broader workflows that integrate APIs, external databases, human review systems, and automated decision engines. Testing individual models without evaluating the full workflow can create blind spots.

In a sandbox environment, organizations can simulate:

- End-to-end approval processes

- Customer query handling scenarios

- Fraud detection escalation paths

- Human-in-the-loop validation procedures

Testing workflows reveals cascading risks. For example, a moderately inaccurate model may become highly problematic if downstream automation amplifies its outputs without oversight.

Regulatory and Compliance Alignment

Governments worldwide are introducing regulations addressing AI transparency, accountability, and safety. Regulatory frameworks often require:

- Documented testing procedures

- Risk assessment reporting

- Explainability validation

- Human oversight mechanisms

AI sandboxes facilitate these requirements by creating a documented, repeatable testing environment. Structured experimentation provides evidence that due diligence occurred prior to deployment.

In heavily regulated industries such as finance and healthcare, sandbox validation may serve as a prerequisite before production release. Auditors often examine test logs, validation criteria, and model change histories.

Security Considerations in AI Sandboxes

While sandboxes are designed to reduce risk, they can themselves become targets if improperly secured. Strong security practices include:

- Zero-trust architecture: Every access request is authenticated and verified.

- Encrypted data storage: Both at rest and in transit.

- Strict credential management: Short-lived tokens and multifactor authentication.

- Red team exercises: Simulated attacks to probe vulnerabilities.

Adversarial testing is especially important for AI systems that process external input. Attackers may attempt prompt injection, data poisoning, or model inversion attacks. A sandbox provides a safe arena to evaluate defensive resilience.

Benefits Beyond Risk Reduction

Although safety is the primary purpose of AI sandbox environments, organizations often discover additional advantages:

- Faster experimentation: Teams can innovate without fear of immediate production impact.

- Cross-team collaboration: Data scientists, engineers, and compliance officers work within a shared test framework.

- Improved documentation discipline: Structured testing promotes governance maturity.

- Higher deployment confidence: Stakeholders gain measurable performance insights.

By lowering the cost of failure, sandboxes paradoxically accelerate responsible innovation.

Challenges in Implementing AI Sandbox Environments

Despite their advantages, sandbox environments require careful resource planning. Common challenges include:

- Infrastructure duplication costs

- Maintaining dataset freshness

- Ensuring experimental results generalize to production conditions

- Managing version control complexity

Organizations must also ensure that sandboxes do not become static environments that fail to reflect evolving production realities. Continuous synchronization—without compromising isolation—is key.

Best Practices for Responsible Sandbox Governance

Effective sandbox strategies combine technical and managerial oversight. Recommended best practices include:

- Define clear experimentation boundaries. Document what may and may not be tested.

- Implement formal model promotion pathways. Move systems from sandbox to staging to production through predefined approval processes.

- Maintain version traceability. Ensure every deployment references specific validated test results.

- Conduct periodic audits. Verify that sandbox controls remain operational and relevant.

- Integrate ethical review checkpoints. Assess fairness and societal impact before approval.

Governance transforms sandboxing from a technical utility into a cornerstone of enterprise AI strategy.

The Strategic Role of AI Sandboxing

As AI adoption expands, public trust increasingly depends on visible evidence of safety precautions. Organizations that can demonstrate structured testing environments distinguish themselves from competitors that rely on informal experimentation.

AI sandbox environments signal maturity. They reflect an understanding that advanced automation must be accompanied by disciplined oversight. Instead of treating AI as an experimental novelty, sandboxing treats it as mission-critical infrastructure deserving of rigorous validation.

In the coming years, sandbox environments are likely to become standard components of AI lifecycle management platforms. Integration with model registries, monitoring dashboards, and compliance reporting tools will make sandbox testing a continuous and automated process rather than a one-time checkpoint.

Conclusion

AI sandbox environments provide the structural safeguards necessary for responsible artificial intelligence deployment. By isolating experiments, controlling data exposure, and formalizing validation procedures, they reduce operational, legal, and reputational risks. More importantly, they foster disciplined innovation—allowing organizations to test boldly while protecting stakeholders.

In high-stakes environments, the question is no longer whether AI should be tested in isolation, but how rigorously that isolation is designed. A thoughtfully constructed sandbox is not merely a protective layer; it is a foundation for trustworthy, sustainable AI transformation.