The internet feels fast. Until it doesn’t. A slow-loading page, a buffering video, or a laggy checkout can drive users away in seconds. That’s where edge computing steps in. Instead of sending data across the world to a central server, edge platforms process it closer to the user. The result? Faster responses. Happier visitors. More conversions.

TLDR: Edge computing platforms move your code closer to users, reducing latency by up to 60%. They run applications on global networks of servers instead of one central location. This means faster load times, smoother apps, and better user experiences. Platforms like Fastly Compute, AWS Lambda@Edge, Vercel Edge Functions, and Akamai EdgeWorkers are leading the charge.

Let’s break it down in a fun and simple way.

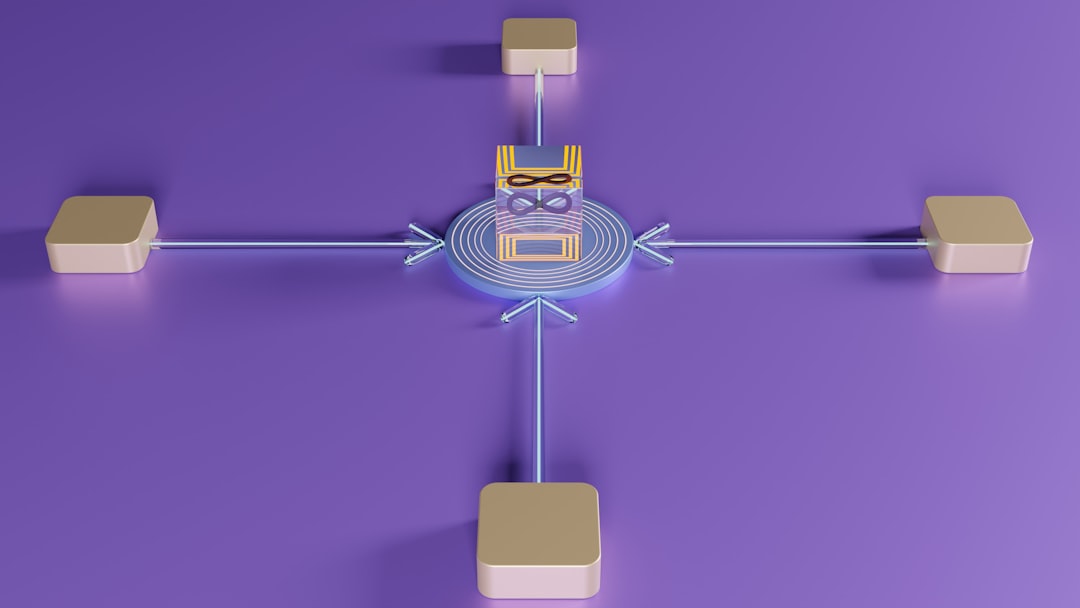

What Is Edge Computing?

Imagine ordering coffee.

If your barista is in your kitchen, you get coffee fast. If your barista is in another country, you wait. A long time.

Traditional cloud computing is like that faraway barista. Your data travels miles to a central data center. Then it travels back.

Edge computing moves the barista closer to you.

Instead of one big center, there are many small ones spread across the globe. These are called edge locations. They sit near users. So data travels shorter distances. That reduces latency.

Latency is the delay before a transfer of data begins. Lower latency means less waiting.

In some real-world cases, moving to the edge reduces latency by around 60%, and occasionally more. The exact figure depends on where your users and your origin servers sit.

Why Latency Matters So Much

Milliseconds matter online.

- 100 milliseconds of delay can hurt conversion rates.

- 1 second of delay can reduce customer satisfaction.

- 3 seconds of delay can make users leave entirely.

Think about:

- Online games

- Video streaming

- Financial trading apps

- E-commerce checkout pages

- AI-powered chat tools

All of them need speed. Real-time speed.

Edge computing helps by:

- Reducing physical distance

- Handling requests locally

- Caching smartly

- Running lightweight code instantly

Now let’s look at four big players besides Cloudflare Workers that are making this happen.

1. Fastly Compute

Fastly is known for speed. It powers large media sites and streaming platforms.

Fastly Compute allows developers to run custom code directly at the edge.

Here’s why it stands out:

- Ultra-low latency performance

- Designed for high-traffic apps

- Strong real-time caching controls

- Runs code written in modern languages like Rust, compiled to WebAssembly

Fastly’s architecture is built for instant content delivery. Instead of sending requests back to a central server, Fastly processes logic at the nearest edge node.

This means:

- Faster content personalization

- Quicker API responses

- Improved streaming speeds

Companies using Fastly often report noticeable drops in load times. Especially for global audiences.

If your users are spread across continents, Fastly keeps things smooth.

2. AWS Lambda@Edge

Amazon Web Services is massive. And Lambda@Edge brings its power closer to users.

Lambda@Edge extends AWS Lambda functions to Amazon CloudFront edge locations around the world.

In simple terms? You can run serverless code near your users without managing servers.

What makes it powerful:

- Deep integration with AWS ecosystem

- Automatic scaling

- Event-driven architecture

- Strong security features

Let’s say someone visits your website from Tokyo. Instead of every request traveling to a US data center, your function runs at a CloudFront location near the visitor, in or close to Japan.

That saves precious milliseconds.

Use cases include:

- Dynamic content generation

- Header rewriting

- Authentication at the edge

- A/B testing with low latency
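As a rough illustration of the A/B testing use case, here is a minimal TypeScript sketch of a viewer-request function. The interfaces cover only the parts of the CloudFront event that the sketch touches. The `bucket` cookie name, the `/index-b.html` path, and the helper names `chooseBucket` and `rewriteRequest` are hypothetical choices made for this example, not part of any AWS API.

```typescript
// Minimal subset of the CloudFront event shape that Lambda@Edge passes in.
interface CfHeader { key?: string; value: string }
interface CfRequest { uri: string; headers: Record<string, CfHeader[] | undefined> }
interface CfEvent { Records: Array<{ cf: { request: CfRequest } }> }

type Bucket = "a" | "b";

// Reuse the visitor's bucket if the (hypothetical) "bucket" cookie is set,
// otherwise assign one at random with a 50/50 split.
function chooseBucket(cookieHeader: string, random: () => number): Bucket {
  const match = /(?:^|;\s*)bucket=(a|b)(?:;|$)/.exec(cookieHeader);
  if (match) return match[1] as Bucket;
  return random() < 0.5 ? "a" : "b";
}

// Rewrite the URI for variant "b" so the alternate page is served.
function rewriteRequest(request: CfRequest, random: () => number): CfRequest {
  const cookies = (request.headers["cookie"] ?? [])
    .map((header) => header.value)
    .join("; ");
  if (chooseBucket(cookies, random) === "b" && request.uri === "/index.html") {
    request.uri = "/index-b.html";
  }
  return request;
}

// Entry point; in a real deployment this would be exported as `handler`.
const handler = async (event: CfEvent): Promise<CfRequest> =>
  rewriteRequest(event.Records[0].cf.request, Math.random);
```

The sketch does not persist a new visitor's bucket. Setting the cookie would typically happen in a separate function that runs on the response.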

Businesses already using AWS find Lambda@Edge easy to adopt. It fits into their existing tools.

And the latency reduction can be dramatic when serving global traffic.

3. Vercel Edge Functions

Vercel is popular with front-end developers. Especially those building modern web apps.

Its Edge Functions bring serverless logic to edge locations worldwide.

Vercel focuses heavily on performance and developer experience.

Why developers love it:

- Simple deployment process

- Optimized for frontend frameworks

- Automatic global scaling

- Minimal configuration

If you’re building with modern frameworks, Vercel feels natural.

You write lightweight functions. Deploy. And they instantly run at edge locations near users.

This makes:

- Personalized pages faster

- Geo-based content instant

- Authentication smoother
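To make the geo-based content idea concrete, the following is a minimal TypeScript sketch. It assumes the visitor's country arrives in the `x-vercel-ip-country` request header. The `HeaderReader` interface, the `greetingFor` helper, and the greeting table are hypothetical names invented for this example.

```typescript
// Anything with a Headers-style `get` method, such as `request.headers`.
interface HeaderReader {
  get(name: string): string | null;
}

// Hypothetical greeting table keyed by two-letter country code.
const GREETINGS: Record<string, string | undefined> = {
  JP: "Konnichiwa",
  FR: "Bonjour",
  DE: "Hallo",
};

// Pick a greeting from the country header, falling back to English
// when the header is missing or the country is not in the table.
function greetingFor(headers: HeaderReader): string {
  const country = headers.get("x-vercel-ip-country") ?? "";
  return GREETINGS[country.toUpperCase()] ?? "Hello";
}

// Inside an edge function this could be used roughly as:
//   return new Response(greetingFor(request.headers));
```

Because the lookup is a plain table read, it adds almost no work per request, which is the kind of lightweight logic edge functions are suited to.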

For startups and fast-growing apps, this matters a lot.

Users expect instant experiences. Vercel helps deliver that without complex infrastructure management.

Latency improvements of 40–60% are common when switching from centralized servers to edge execution.

That’s not just faster. That’s noticeable.

4. Akamai EdgeWorkers

Akamai is one of the oldest and most experienced content delivery networks in the world.

EdgeWorkers allows developers to execute JavaScript at Akamai’s edge nodes.

And Akamai has a lot of nodes.

Here’s what makes it powerful:

- Massive global footprint

- Enterprise-grade reliability

- Strong security integration

- High scalability

Large enterprises love Akamai because of its stability and scale.

EdgeWorkers can:

- Modify responses in real time

- Personalize user experiences

- Accelerate API responses

- Reduce backend server load
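As a sketch of the first item, modifying a response in real time, the TypeScript below follows the general shape of an EdgeWorkers `onClientResponse` handler. The two interfaces are simplified stand-ins declared only for this example, and the `X-Visitor-Country` header name is hypothetical.

```typescript
// Simplified stand-ins for the request and response objects an
// event handler receives; only the members used here are declared.
interface EdgeRequest {
  userLocation?: { country?: string };
}
interface EdgeResponse {
  setHeader(name: string, value: string): void;
}

// Tag the outgoing response with the visitor's country before it
// leaves the edge node. "X-Visitor-Country" is a made-up header name.
// In a deployed bundle this function would be exported.
function onClientResponse(request: EdgeRequest, response: EdgeResponse): void {
  const country = request.userLocation?.country ?? "unknown";
  response.setHeader("X-Visitor-Country", country);
}
```

The origin server never sees this step. The header is added at the edge, so the backend does no extra work.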

For industries like finance, healthcare, and media, performance and compliance are crucial.

Akamai delivers both.

By executing logic closer to users, it significantly cuts down wait times. Especially during traffic spikes.

How These Platforms Reduce Latency by Up to 60%

You might wonder: where does that 60% reduction come from?

Here are the main reasons:

1. Shorter Physical Distance

Data travels through fiber at roughly two-thirds the speed of light. Even so, covering thousands of kilometers takes time. When servers are closer, transmission time drops.
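A back-of-the-envelope calculation shows the scale of the effect. The sketch assumes signals move through optical fiber at roughly 200,000 km/s and ignores routing detours and processing time. Both distances are hypothetical.

```typescript
// Rule-of-thumb signal speed in optical fiber: about 200,000 km/s,
// which is 200 km per millisecond.
const FIBER_SPEED_KM_PER_MS = 200;

// Round-trip propagation delay for a straight fiber path.
function roundTripMs(distanceKm: number): number {
  return (2 * distanceKm) / FIBER_SPEED_KM_PER_MS;
}

const farOriginMs = roundTripMs(10000); // hypothetical distant origin
const nearbyEdgeMs = roundTripMs(500);  // hypothetical nearby edge node

console.log(`origin: ${farOriginMs} ms, edge: ${nearbyEdgeMs} ms`);
```

Under these assumptions the distant origin costs 100 ms per round trip before any work is done, while the nearby edge node costs 5 ms.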

2. Fewer Network Hops

Traditional requests bounce through multiple routers and servers. Edge computing reduces those stops.

3. Smarter Caching

Frequently requested content stays at the edge. No need to fetch it from origin servers.
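The idea can be sketched as a small time-to-live cache in TypeScript. This is a simplified in-memory model written for illustration, not how any particular platform stores content. `EdgeCache`, `serve`, and the `fetchFromOrigin` callback are hypothetical names.

```typescript
interface CacheEntry {
  body: string;
  expiresAt: number; // timestamp in milliseconds
}

// A tiny in-memory cache with a fixed time-to-live, standing in for
// the storage an edge node keeps close to users.
class EdgeCache {
  private entries = new Map<string, CacheEntry>();
  private ttlMs: number;

  constructor(ttlMs: number) {
    this.ttlMs = ttlMs;
  }

  get(key: string, now: number): string | undefined {
    const entry = this.entries.get(key);
    if (entry === undefined) return undefined;
    if (entry.expiresAt <= now) {
      this.entries.delete(key); // stale: drop it and report a miss
      return undefined;
    }
    return entry.body;
  }

  set(key: string, body: string, now: number): void {
    this.entries.set(key, { body, expiresAt: now + this.ttlMs });
  }
}

// Serve from the edge when possible; otherwise fetch from the origin
// and keep a copy for the next visitor.
function serve(
  cache: EdgeCache,
  path: string,
  now: number,
  fetchFromOrigin: (path: string) => string,
): { body: string; source: "edge" | "origin" } {
  const cached = cache.get(path, now);
  if (cached !== undefined) return { body: cached, source: "edge" };
  const body = fetchFromOrigin(path);
  cache.set(path, body, now);
  return { body, source: "origin" };
}
```

The first request for a path pays the trip to the origin. Later requests within the time-to-live are answered from the edge copy.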

4. Localized Processing

Instead of asking a central server to think, the edge server thinks locally.

Together, these improvements add up.

Less travel. Less waiting. Faster results.
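To see how these factors might combine into a figure like 60%, here is a simple TypeScript model. Every number in it is an illustrative assumption rather than a measurement, and the `Scenario` fields and function names are hypothetical.

```typescript
// Every number used with this model is an assumption, not a measurement.
interface Scenario {
  userToOriginMs: number; // round trip from the user straight to the origin
  userToEdgeMs: number;   // round trip from the user to the nearest edge node
  edgeToOriginMs: number; // extra round trip when the edge must ask the origin
  edgeHitRatio: number;   // fraction of requests answered entirely at the edge
}

// Expected latency through the edge: every request pays the short hop,
// and only the misses pay the long trip to the origin.
function expectedEdgeLatencyMs(s: Scenario): number {
  return s.userToEdgeMs + (1 - s.edgeHitRatio) * s.edgeToOriginMs;
}

// Percentage improvement over going straight to the origin.
function reductionPercent(s: Scenario): number {
  return (1 - expectedEdgeLatencyMs(s) / s.userToOriginMs) * 100;
}

const example: Scenario = {
  userToOriginMs: 100,
  userToEdgeMs: 20,
  edgeToOriginMs: 90,
  edgeHitRatio: 0.8,
};

console.log(reductionPercent(example).toFixed(0) + "% lower latency");
```

With these assumed inputs, the expected latency is 20 + 0.2 × 90 = 38 ms, a reduction of about 62%. The model also shows the limit: if the edge can answer almost nothing locally, the extra hop can cancel out the gain.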

Who Benefits the Most?

Not every app needs edge computing. But many do.

It shines in:

- E-commerce – Faster checkout means more sales.

- Streaming platforms – Less buffering keeps viewers watching.

- Online gaming – Lower ping improves gameplay.

- SaaS apps – Snappy dashboards increase productivity.

- AI tools – Real-time responses feel magical.

If your users are global, edge computing is almost a must.

Things to Consider Before Choosing

Each platform has strengths.

Ask yourself:

- Are you already using AWS?

- Do you want developer simplicity?

- Do you handle enterprise-scale traffic?

- Is security your top concern?

Your answers guide your choice.

Also consider:

- Pricing structure

- Supported programming languages

- Deployment workflow

- Monitoring tools

Edge computing is powerful. But it should fit your existing stack.

The Future Is Closer Than You Think

The web is moving toward distributed systems.

Users expect instant experiences. Everywhere.

Edge computing is not just a trend. It is becoming the default way to build modern applications.

As AI, IoT devices, and real-time apps grow, centralized servers alone cannot keep up.

Processing needs to happen closer to where data is created.

That’s the edge.

Final Thoughts

Speed wins online.

Edge platforms like Fastly Compute, AWS Lambda@Edge, Vercel Edge Functions, and Akamai EdgeWorkers help businesses cut latency by up to 60%.

They bring code closer to users. They reduce travel time. They improve experiences.

And best of all? They let developers do this without managing complex hardware.

The internet feels instant when done right.

Edge computing is how we get there.