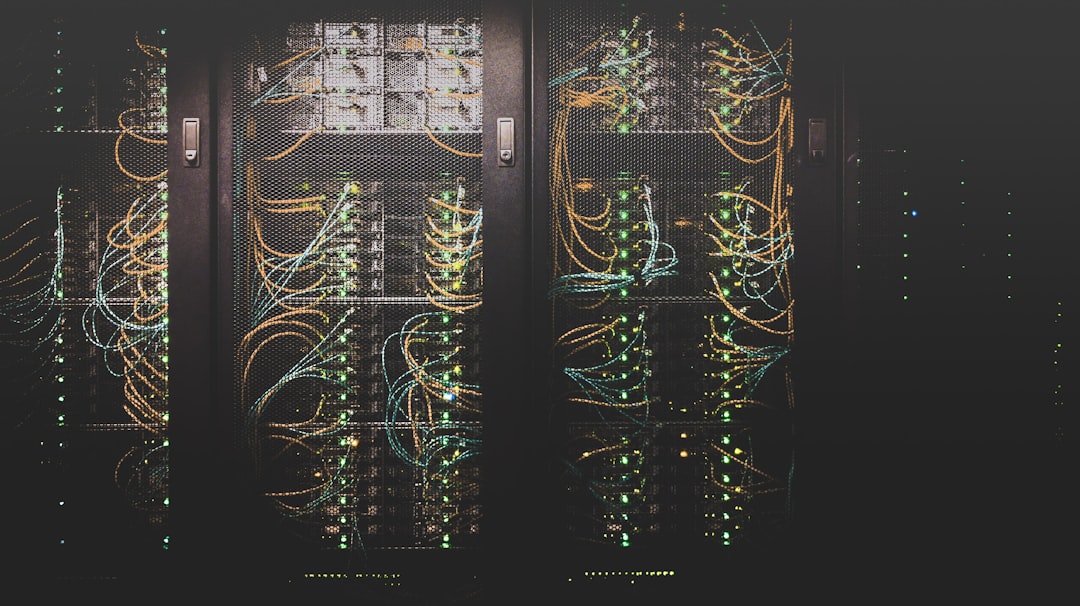

Function-as-a-Service platforms have become a practical foundation for teams that need applications to respond quickly to unpredictable traffic without managing fleets of servers. Under heavy load, these platforms can create many isolated function instances, route events to them, and reduce capacity when demand falls. The result is a model that can be cost-efficient, resilient, and operationally simpler, provided the platform’s limits, cold-start behavior, and integration patterns are understood.

TL;DR: The strongest Function-as-a-Service platforms for automatic scaling include AWS Lambda, Google Cloud Functions, Azure Functions, Cloudflare Workers, IBM Cloud Code Engine, Oracle Functions, and Knative. Each can scale under heavy load, but they differ in latency, pricing, runtime support, deployment model, and operational control. For most organizations, the best choice depends less on raw scaling capability and more on ecosystem fit, traffic shape, compliance requirements, and observability needs.

What Automatic Scaling Means in FaaS

In a traditional server-based application, engineers estimate peak demand, provision machines, configure load balancers, and expand capacity before traffic arrives. A Function-as-a-Service, or FaaS, platform changes that model. Developers deploy small units of code that run in response to events such as HTTP requests, queue messages, database changes, file uploads, or scheduled jobs.

When incoming demand rises, the platform automatically starts additional function instances. When demand declines, it removes idle instances. This behavior is useful during product launches, seasonal spikes, media exposure, payment-processing bursts, and background jobs that arrive in waves.

However, automatic scaling is not unlimited scaling. Every platform has boundaries: regional quotas, concurrency limits, execution duration limits, memory ceilings, network restrictions, and downstream service bottlenecks. A serious FaaS strategy looks beyond the marketing promise and evaluates how the entire system behaves under load.
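
To make the idea of a concurrency boundary concrete, the sketch below estimates how many function instances are in flight at a given request rate. It uses Little's law (in-flight work equals arrival rate times average duration) and assumes each instance handles one request at a time. The function names and the example quota of 1,000 are illustrative assumptions, not values taken from any particular platform.

```python
import math


def required_concurrency(requests_per_second: float, avg_duration_seconds: float) -> int:
    """Estimate concurrent executions via Little's law.

    Assumes one request per instance at a time (an assumption; some
    platforms allow several requests per instance).
    """
    return math.ceil(requests_per_second * avg_duration_seconds)


def fits_quota(requests_per_second: float, avg_duration_seconds: float,
               concurrency_quota: int) -> bool:
    """Return True if the estimated concurrency stays within the quota."""
    return required_concurrency(requests_per_second, avg_duration_seconds) <= concurrency_quota


# Hypothetical launch-day numbers: 2,000 requests/s at 250 ms each.
peak = required_concurrency(2000, 0.25)
print(peak, fits_quota(2000, 0.25, concurrency_quota=1000))
```

If a slow dependency pushes the average duration from 250 ms to one second, the same traffic needs four times the concurrency, which is one way a downstream bottleneck turns into throttling at the function layer.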

1. AWS Lambda

AWS Lambda remains the reference point for FaaS platforms. It integrates deeply with the AWS ecosystem, including Amazon API Gateway, EventBridge, S3, DynamoDB, SQS, SNS, Kinesis, Step Functions, and many monitoring tools. For teams already invested in AWS, Lambda is often the most natural option.

Lambda scales by running additional function instances as events arrive. For asynchronous workloads, it can absorb large bursts and process them according to service quotas and concurrency settings. For synchronous APIs, scaling depends on concurrency availability, API Gateway configuration, and the behavior of any downstream databases or services.

- Best for: event-driven systems, AWS-native applications, background processing, APIs, automation, file processing.

- Scaling strength: mature concurrency controls, provisioned concurrency, strong event-source integrations.

- Important caution: poorly controlled scaling can overwhelm databases, third-party APIs, or internal services.

Lambda is especially strong when paired with queues. By placing Amazon SQS or Kinesis between the producer and the function, teams can smooth traffic spikes and protect downstream systems. For latency-sensitive applications, Provisioned Concurrency can reduce cold starts, though it adds cost.
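
As a minimal sketch of the queue-backed pattern, the Python handler below processes a batch of SQS records and reports only the failed messages back to Lambda, so successful messages are not retried. It assumes the event source mapping has partial batch responses (`ReportBatchItemFailures`) enabled. `process_order` and the `order_id` field are hypothetical placeholders for real business logic.

```python
import json


def process_order(order: dict) -> None:
    """Hypothetical business logic; raises if the payload is unusable."""
    if "order_id" not in order:
        raise ValueError("missing order_id")


def handler(event, context):
    """Lambda entry point for an SQS-triggered function."""
    failures = []
    for record in event.get("Records", []):
        try:
            process_order(json.loads(record["body"]))
        except Exception:
            # Report only this message as failed so SQS redelivers it
            # without replaying the rest of the batch.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

Pairing a handler like this with a reserved concurrency limit on the function is one way to cap how hard the consumers can hit a downstream database while the queue absorbs the burst.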

2. Google Cloud Functions

Google Cloud Functions is Google Cloud’s managed FaaS platform. It supports HTTP-triggered functions and event-driven workloads using services such as Cloud Storage, Pub/Sub, Firestore, and Eventarc. It is a strong choice for teams working with Google Cloud data services, Firebase applications, analytics pipelines, and lightweight APIs.

Google Cloud Functions can automatically create new instances as request volume grows. Its 2nd generation runs on Cloud Run infrastructure, which brings improved performance controls, per-instance concurrency options, and broader runtime flexibility.

- Best for: Google Cloud applications, Firebase backends, Pub/Sub processing, lightweight APIs.

- Scaling strength: good event integration and improved concurrency behavior in newer versions.

- Important caution: configuration choices such as maximum instances and concurrency strongly affect performance and cost.

For heavy load, developers should pay close attention to maximum instance settings. These settings are valuable because they prevent runaway cost and protect backend systems, but they can also throttle throughput if set too low. As with every FaaS platform, testing with realistic payloads is essential.
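
The trade-off can be reasoned about with simple arithmetic. The sketch below computes the throughput ceiling implied by a maximum-instances setting and, in the other direction, the smallest instance cap that supports a target request rate. The function names and example numbers are assumptions for illustration; real throughput also depends on cold starts and downstream latency.

```python
import math


def max_throughput_rps(max_instances: int, concurrency_per_instance: int,
                       avg_duration_seconds: float) -> float:
    """Upper bound on requests per second for a given instance cap."""
    return max_instances * concurrency_per_instance / avg_duration_seconds


def min_instances_for(target_rps: float, concurrency_per_instance: int,
                      avg_duration_seconds: float) -> int:
    """Smallest instance cap that can sustain the target request rate."""
    return math.ceil(target_rps * avg_duration_seconds / concurrency_per_instance)


# Hypothetical settings: cap of 100 instances, 1 request each, 250 ms per request.
print(max_throughput_rps(100, 1, 0.25))
# Hypothetical target: 1,200 requests/s with 4 concurrent requests per instance.
print(min_instances_for(1200, 4, 0.25))
```

A cap chosen purely for cost protection can be checked against the expected peak this way before a load test confirms the real figure.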

3. Azure Functions

Azure Functions is Microsoft’s serverless compute platform and is particularly attractive for organizations using Azure, Microsoft identity services, .NET, SQL Server, Event Hubs, Service Bus, Cosmos DB, or Microsoft 365 integrations. It supports multiple hosting plans, which gives teams more control over scaling behavior, cost, and performance guarantees.

Azure Functions can scale automatically under heavy load, especially when running on the Consumption plan or Premium plan. The Premium plan matters for production systems with stricter latency requirements because it supports pre-warmed instances, which reduce cold starts.

- Best for: enterprise workloads, Microsoft ecosystems, integration services, event processing, .NET applications.

- Scaling strength: flexible hosting plans, strong integration with Azure messaging and data services.

- Important caution: the choice of plan has a major impact on cold starts, scaling speed, and predictable cost.

Azure Functions is often used in business-critical workflows because it fits naturally with Azure’s identity, governance, and monitoring capabilities. When designing for heavy load, teams should consider durable workflows, queue-based processing, and careful configuration of host settings.

4. Cloudflare Workers

Cloudflare Workers differs from many traditional FaaS platforms because it runs code at Cloudflare’s global edge network rather than only in centralized cloud regions. This makes it highly effective for low-latency HTTP workloads, request routing, authentication checks, personalization, A/B testing, bot filtering, and lightweight API logic close to users.

Under heavy traffic, Cloudflare Workers can scale across a very large distributed network. Because functions execute near the user, they can reduce latency and offload traffic from origin infrastructure. This is especially useful for globally distributed applications that receive unpredictable request spikes.

- Best for: edge APIs, request transformation, global low-latency workloads, security logic, content personalization.

- Scaling strength: broad edge distribution and rapid handling of high HTTP request volume.

- Important caution: execution model, runtime APIs, and limits differ from traditional cloud functions.

Cloudflare Workers is not always the right choice for long-running compute jobs or workloads tightly coupled to a major cloud provider’s internal services. But for edge-first traffic handling, it is one of the most compelling platforms available.

5. IBM Cloud Code Engine

IBM Cloud Code Engine is a fully managed serverless platform that can run container images, jobs, and functions. It is built with a focus on abstraction: developers bring code or containers, while the platform handles infrastructure provisioning, scaling, and operations. This makes it suitable for organizations that want serverless behavior without being limited to a narrow function packaging model.

For heavy load, Code Engine can automatically scale applications based on incoming requests and workload demand. It is particularly useful when teams want to deploy containerized workloads while still benefiting from scale-to-zero economics and automatic capacity management.

- Best for: containerized serverless applications, batch jobs, enterprise workloads, teams using IBM Cloud.

- Scaling strength: supports both function-style and container-style serverless deployment.

- Important caution: ecosystem breadth and regional availability should be evaluated against application needs.

Code Engine is a serious option for enterprises that prefer container portability but want to avoid managing Kubernetes directly. It can also serve teams that need to run event-driven jobs, APIs, and task-based workloads on a common managed platform.

6. Oracle Functions

Oracle Functions is Oracle Cloud Infrastructure’s managed FaaS offering. It is based on the open-source Fn Project and is designed for event-driven workloads inside OCI. It integrates with Oracle services such as Object Storage, Events, API Gateway, Streaming, and Notifications.

For organizations running Oracle databases, enterprise applications, or regulated systems on OCI, Oracle Functions can provide an effective way to add automation and event-driven processing without operating servers. It can scale automatically as requests and events increase, subject to configuration and service limits.

- Best for: OCI-native workloads, processes adjacent to Oracle databases, event automation, enterprise integration.

- Scaling strength: useful integration with Oracle Cloud services and container-based function packaging.

- Important caution: it is most compelling when the broader application estate is already on OCI.

Oracle Functions is not usually selected as a standalone serverless platform by teams outside the Oracle ecosystem. But within OCI-heavy environments, it can be a practical and governed choice for scaling event-driven tasks.

7. Knative

Knative is not a single commercial FaaS provider. It is an open-source serverless platform that runs on Kubernetes and provides capabilities such as request-based autoscaling, eventing, and scale-to-zero. It is used directly by some organizations and also influences managed platforms; Google Cloud Run, for example, exposes an API compatible with Knative Serving.

Knative is attractive when organizations want the operational model of serverless but need more control over infrastructure, networking, compliance, or deployment environments. It can scale services up under heavy load and scale them down when idle, using Kubernetes as the underlying control plane.

- Best for: platform engineering teams, Kubernetes-native organizations, hybrid cloud, regulated environments.

- Scaling strength: flexible autoscaling, portability, and control over infrastructure.

- Important caution: it requires more operational maturity than fully managed FaaS services.

Knative can be powerful, but it is not “hands-off” in the same way as AWS Lambda or Azure Functions. Teams must operate Kubernetes reliably, configure autoscaling behavior, manage observability, and secure the platform. In exchange, they gain portability and architectural control.
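
To illustrate what "configuring autoscaling behavior" involves, the sketch below shows a simplified version of request-based scaling: the number of replicas is the observed in-flight request count divided by a per-replica concurrency target, clamped to minimum and maximum bounds. This is a teaching model under stated assumptions, not the Knative autoscaler itself, which also uses averaging windows, panic mode, and rate limits. All names here are hypothetical.

```python
import math
from typing import Optional


def desired_replicas(in_flight_requests: float, target_per_replica: float,
                     min_scale: int = 0, max_scale: Optional[int] = None) -> int:
    """Simplified request-based autoscaling decision.

    min_scale=0 models scale-to-zero; max_scale=None means no upper cap.
    """
    if target_per_replica <= 0:
        raise ValueError("target_per_replica must be positive")
    wanted = math.ceil(in_flight_requests / target_per_replica)
    wanted = max(wanted, min_scale)
    if max_scale is not None:
        wanted = min(wanted, max_scale)
    return wanted


print(desired_replicas(0, 10))                  # idle service
print(desired_replicas(250, 10))                # burst, no cap
print(desired_replicas(250, 10, max_scale=20))  # burst, capped
```

The capped case shows where excess requests would queue or be rejected, which is the point at which teams running Knative need to decide between raising the cap and protecting the cluster.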

Key Factors When Choosing a FaaS Platform

Choosing a FaaS platform should involve more than comparing invocation prices. Heavy-load performance depends on architecture, limits, integrations, runtime behavior, and operational practices.

- Cold starts: Functions may take longer to respond when new instances are created. Provisioned or pre-warmed capacity can help.

- Concurrency limits: Platforms impose account, region, function, or instance limits that must be understood before launch.

- Downstream capacity: Databases, caches, APIs, and message brokers must be able to handle the surge produced by automatic scaling.

- Event source behavior: Queues and streams can buffer demand, while direct HTTP traffic may expose users to latency or throttling.

- Observability: Logs, metrics, traces, and alerts are essential for diagnosing failures under load.

- Cost controls: Automatic scaling can reduce idle waste, but runaway traffic or retry storms can increase expense quickly.
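
The cost effect of retries can be estimated with a small model. The sketch below assumes each attempt fails independently with the same probability and that the platform retries up to a fixed number of times. Both are simplifying assumptions, and the function names are hypothetical. Under that model, the expected number of attempts per event is a finite geometric series.

```python
def expected_attempts(failure_rate: float, max_retries: int) -> float:
    """Expected invocations per event: 1 + p + p^2 + ... + p^max_retries.

    Attempt k+1 only happens if the first k attempts all failed,
    which has probability p^k under the independence assumption.
    """
    if not 0.0 <= failure_rate <= 1.0:
        raise ValueError("failure_rate must be between 0 and 1")
    return sum(failure_rate ** k for k in range(max_retries + 1))


def billed_invocations(events: int, failure_rate: float, max_retries: int) -> float:
    """Expected total invocations once retries are included."""
    return events * expected_attempts(failure_rate, max_retries)


# Hypothetical incident: half of all attempts fail, two retries allowed.
print(expected_attempts(0.5, 2))
print(billed_invocations(1_000_000, 0.5, 2))
```

When a dependency is fully down, every event consumes the maximum number of attempts, so a retry limit of two triples the invocation count for the duration of the outage.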

Best Practices for Heavy-Load Serverless Systems

A reliable FaaS application is designed with controlled scaling in mind. The goal is not simply to allow infinite function instances; the goal is to scale safely while protecting user experience and dependent systems.

- Use queues for bursty workloads. Queue-based designs absorb spikes and let functions process work at a sustainable rate.

- Set concurrency and instance limits deliberately. Limits prevent cascading failures and unexpected bills.

- Load test before major events. Test realistic payload sizes, authentication paths, database calls, and third-party dependencies.

- Design idempotent functions. Retries are common in distributed systems, so functions should safely handle repeated events.

- Monitor end-to-end latency. Function duration alone is not enough; measure queue delay, network calls, and database response times.

- Plan for regional failure. Critical workloads may require multi-region deployment, failover strategies, and data replication.
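
The idempotency practice above can be sketched as follows. The example deduplicates events by a unique ID before applying their effect, so a redelivered event changes nothing. The in-memory set stands in for a durable store such as a database table with a uniqueness constraint; the class name, the `id` and `amount` fields, and the storage choice are all assumptions made for illustration.

```python
class IdempotentProcessor:
    """Applies each event at most once, keyed by its event ID."""

    def __init__(self) -> None:
        # Stand-in for a durable store; an in-memory set would not
        # survive across function instances in a real deployment.
        self._seen = set()
        self.balance = 0

    def handle(self, event: dict) -> bool:
        """Return True if the event was applied, False if it was a duplicate."""
        event_id = event["id"]
        if event_id in self._seen:
            return False
        self.balance += event["amount"]
        self._seen.add(event_id)
        return True


processor = IdempotentProcessor()
payment = {"id": "evt-1", "amount": 40}
print(processor.handle(payment))  # first delivery is applied
print(processor.handle(payment))  # retry is ignored
print(processor.balance)
```

In a real system the check and the record step would need to be a single atomic operation, for example a conditional write, because two instances can receive the same event at the same time.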

Final Assessment

The seven platforms covered here can all scale automatically under heavy load, but they serve different priorities. AWS Lambda is the mature default for AWS-centric systems. Google Cloud Functions and Azure Functions are strong choices inside their respective cloud ecosystems. Cloudflare Workers excels at globally distributed edge workloads. IBM Cloud Code Engine and Oracle Functions are valuable for organizations aligned with those enterprise clouds. Knative is best for teams that want serverless behavior with Kubernetes-level control.

The most dependable approach is to treat FaaS as an architectural tool, not a universal replacement for every compute model. When functions are paired with queues, clear limits, strong monitoring, and resilient downstream services, they can handle heavy load efficiently. The right platform is the one that scales not only the code, but the entire system safely, predictably, and economically.